All your zero days are belong to us

A heap overflow that had sat in the Linux kernel since 2003 wasn't hidden; it had simply never been found. AI found it, and the assumption that old code is safe code is now wrong.

In February 2026, Nicholas Carlini at Anthropic ran a Claude model across a large sweep of open-source software. The model found a buffer overflow in the NFS code that had been sitting in the Linux kernel since 2003. It survived Heartbleed. It survived Spectre and Meltdown. It survived decades of kernel security audits.

AI didn’t find that bug by being smarter than the engineers who missed it. It found it by having no attention limit. That distinction invalidates the assumption that old code is safe code.

How old bugs survive

Human auditors sample. They fast-scan, pattern-match, and triage by severity. What they can’t do is hold the full protocol interaction state of a complex NFS implementation across thousands of lines simultaneously—tracking every code path without losing the thread.

Bugs like this one don’t survive because they’re well-hidden. They survive because the search space exceeds what any reviewer can hold. The NFSv4 code had been stable long enough that no one was reading it with fresh eyes. Time in production became a proxy for safety. The longer something ran without incident, the less reason to look hard.

That inference was wrong the whole time. There just wasn’t a tool that could prove it.

The search that changed

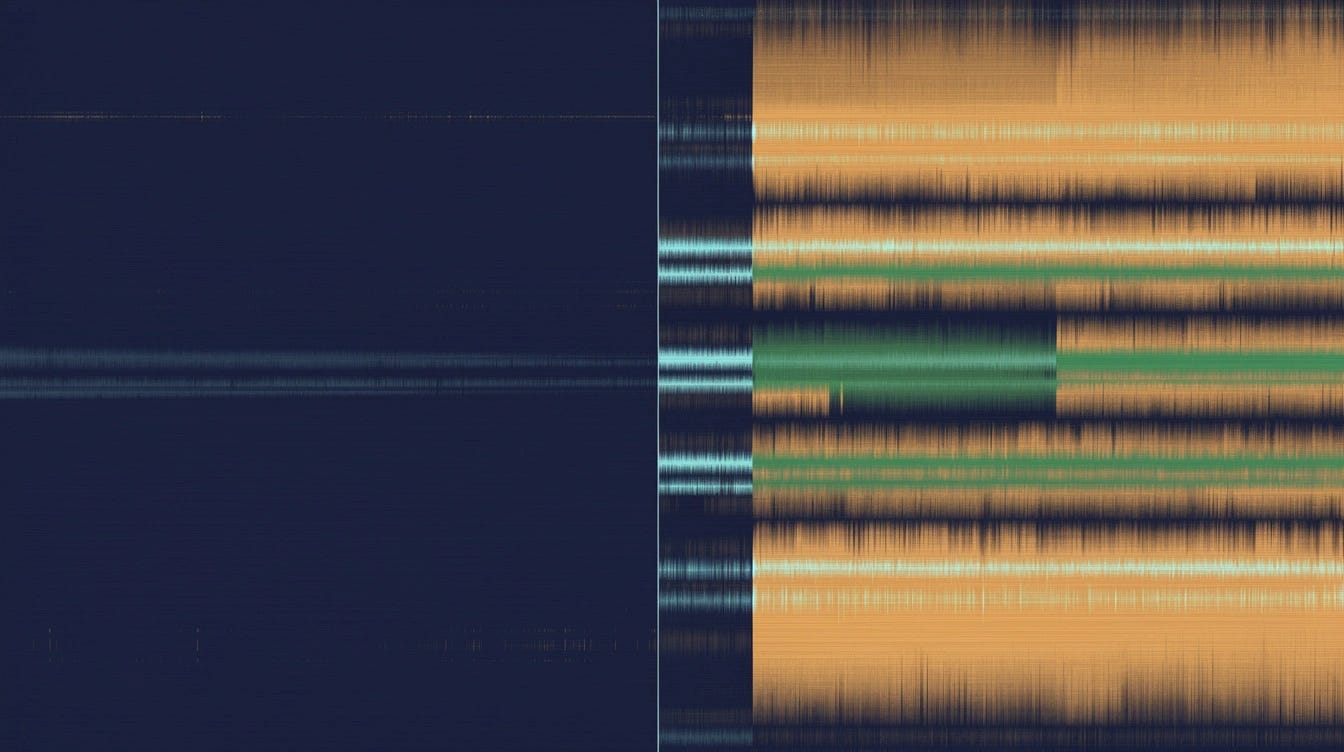

Claude didn’t sample. Carlini fed the model the history of past fixes and had it search for similar patterns that were addressed in one location but not in adjacent code. The model then followed protocol interactions across the full codebase, holding state that a human reviewer couldn’t sustain.
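The idea of looking for a pattern that was fixed in one place but not in its neighbors can be shown with a toy heuristic. This is a minimal sketch and not Carlini's method: the `SINK` and `GUARD` patterns, the function name `find_unguarded_sinks`, and the sample C snippet are all made up for illustration and are not taken from the actual NFS fix. It flags copy calls that lack a nearby length check of the kind a past fix might have added.

```python
import re

# Hypothetical patterns for illustration only; not derived from the real NFS patch.
SINK = re.compile(r"\bmemcpy\s*\(")               # a copy whose length needs checking
GUARD = re.compile(r"\bif\s*\(.*\blen\b.*[<>]")   # a length comparison, as a past fix might add


def find_unguarded_sinks(source: str, window: int = 3) -> list[int]:
    """Return 1-based line numbers of SINK calls with no GUARD in the preceding `window` lines."""
    lines = source.splitlines()
    hits = []
    for i, line in enumerate(lines):
        if not SINK.search(line):
            continue
        preceding = lines[max(0, i - window):i]
        if not any(GUARD.search(p) for p in preceding):
            hits.append(i + 1)
    return hits


# Invented C snippet: one path carries the "fix", its sibling does not.
EXAMPLE = """\
void fixed_path(char *dst, const char *src, size_t len) {
    if (len > MAX_LEN)
        return;
    memcpy(dst, src, len);
}

void sibling_path(char *dst, const char *src, size_t len) {
    memcpy(dst, src, len);
}
"""

print(find_unguarded_sinks(EXAMPLE))  # prints [8]
```

A line-window regex like this is exactly the kind of shallow sampling the article argues is insufficient. It has no notion of data flow or protocol state, which is the part a model tracking interactions across the codebase would add.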

The sweep didn’t stop at one kernel bug. According to Carlini’s published findings, it surfaced 500+ high-severity vulnerabilities across open-source software. Firefox alone yielded 22 CVEs. Mozilla’s response, in a public statement following disclosure: “Within hours, our platform engineers began landing fixes.”

The NFS bug didn’t survive for 23 years because the code was impenetrable. It survived because the bandwidth of the search was the constraint. AI removed that constraint.

The implication

The assumption that old code is safe because it has survived is gone.

Any codebase old enough to feel safe is now an unknown quantity—not because the code changed, but because the search capability did. “It’s been running for 20 years” meant something specific: humans looked at this code over time and didn’t find a critical flaw. That inference is no longer valid. An AI working through the same codebase in hours isn’t making the same kind of search.

The 2003 bug wasn’t hiding. There are more like it.

The double edge

Anthropic handled the NFS bug responsibly, coordinating the patch before public disclosure.

The model that ran the sweep is available to everyone. Anthropic’s own framing, in their published research accompanying the findings: “This is presaging an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

The counterargument is that defenders get the same tools. It’s true. The asymmetry is that attackers only need to find one flaw; defenders need to find all of them.

The standard responsible-disclosure window is 90 days. That window was calibrated around human-speed exploitation: reverse-engineering a patch, building a working exploit, deploying it. AI collapses that window. A model capable of finding a 23-year-old vulnerability in a single sweep can, by the same mechanism, analyze a fresh patch and map the exploit surface in hours. The race is already about offensive deployment at scale.

What gets built instead

Point-in-time penetration testing is insufficient. It samples the way human auditors sample—scoped engagements, bounded time, bounded coverage. Continuous automated audit replaces it: always-on, running against every commit.
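As a rough illustration of what "running against every commit" could look like, here is a minimal sketch. It assumes the commit's changed files arrive as an in-memory mapping, and every name in it (`Finding`, `audit_commit`, `flags_strcpy`) is hypothetical. A real setup would be driven by a CI trigger and would run far deeper analysis than this toy check.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Finding:
    commit: str
    path: str
    message: str


# A check takes (path, content) and yields zero or more messages.
Check = Callable[[str, str], Iterable[str]]


def audit_commit(commit_id: str, changed_files: dict[str, str], checks: list[Check]) -> list[Finding]:
    """Run every check against every file changed in one commit."""
    findings = []
    for path, content in changed_files.items():
        for check in checks:
            for message in check(path, content):
                findings.append(Finding(commit_id, path, message))
    return findings


def flags_strcpy(path: str, content: str) -> Iterable[str]:
    """Toy check (illustrative only): flag any strcpy call in a C source file."""
    if path.endswith(".c") and "strcpy(" in content:
        yield "strcpy call; consider a bounded copy"


# Invented commit contents for the example.
findings = audit_commit(
    "abc123",
    {"net/foo.c": "strcpy(dst, src);", "README": "docs only"},
    [flags_strcpy],
)
for f in findings:
    print(f.commit, f.path, f.message)
```

The design point is the trigger, not the check. A scoped engagement audits what exists on the day it runs, while this loop runs on each change as it lands, so coverage does not decay between reviews.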

The disclosure economics have to be rebuilt. A 90-day window made sense when the threat was a human attacker with months of manual work ahead. It doesn’t make sense when the attacker’s toolchain runs the same sweep Carlini ran. Some projects will push for shorter windows. Others won’t be able to ship fixes in time. The tradeoff gets harder, not easier.

“Audited code” needs a new definition. The old one—reviewed by qualified humans, no known critical vulnerabilities—described a search that humans could actually execute. That search is now the floor, not the ceiling.

Anything running long enough to feel safe has to be reconsidered. Not because the threat model changed. Because the capability to find what was always there did.