Too big to fail, again

The banks got too big to fail, then got regulated into staying that way. Is AI next?

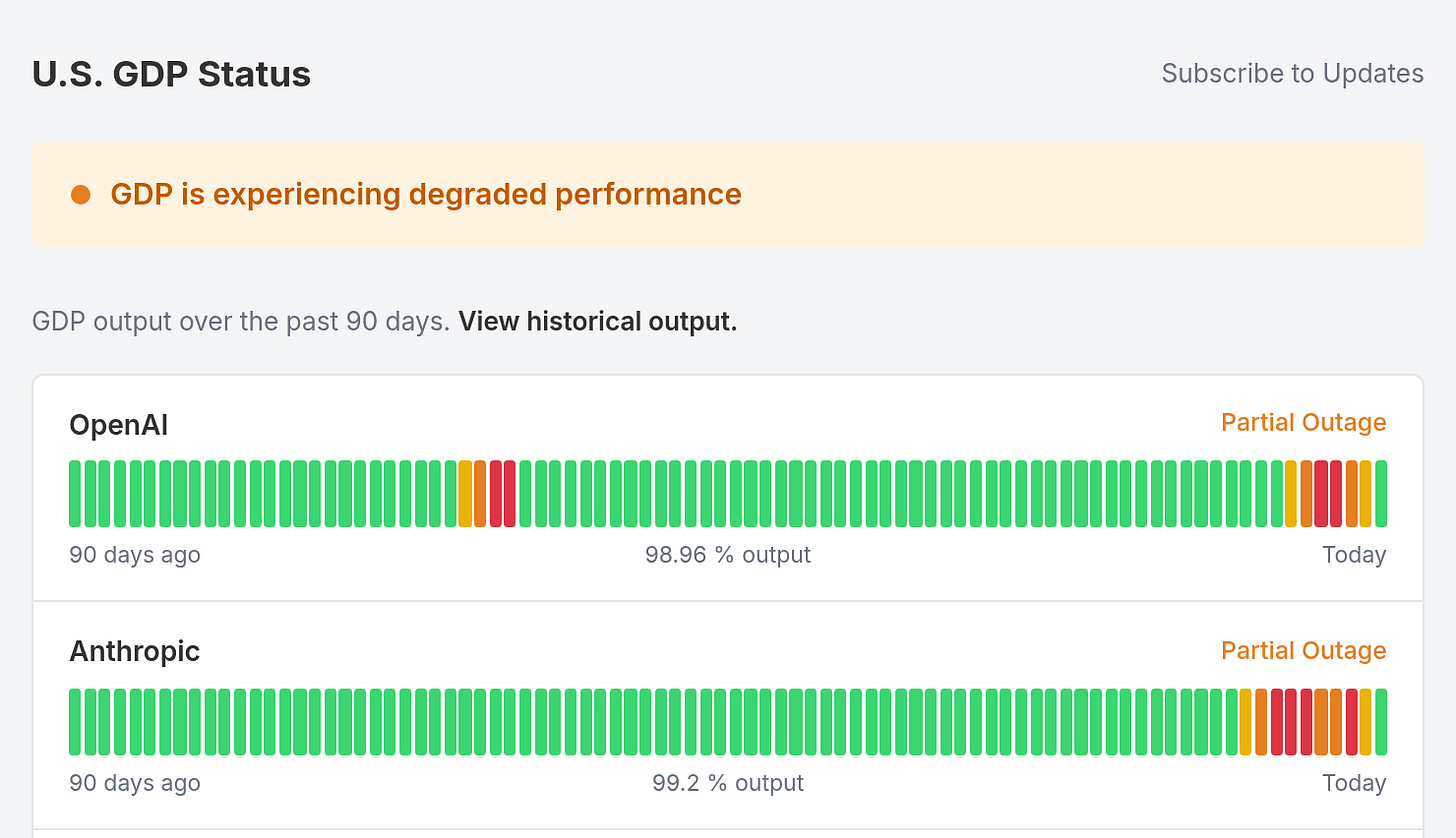

Claude went down three times in March. ChatGPT went down for two days in February: 28,000 reports on Downdetector, developers idle, support queues backed up, and half-written blog posts stuck in draft. Each time the service came back, everyone resumed, and nothing was recorded. No incident report with economic impact, no regulatory filing, no systemic risk assessment. A few thousand tweets and a shrug.

In 2008, “too big to fail” described banks that had woven themselves so deep into the economy’s plumbing that their failure would cascade. The response was regulation—stress tests, capital requirements, systemic risk oversight. It didn’t fix concentration. It institutionalized it. The banks got bigger.

Eighteen years later, a different set of companies is becoming load-bearing. Not for capital flows. For cognitive work. And the same pattern is already forming.

OpenAI processes over two billion API calls per day across enterprise customers who’ve rebuilt operations around inference. Anthropic powers coding workflows, document processing, and customer service automation at companies that no longer have the headcount to do those tasks manually. Google DeepMind, Meta, Amazon Bedrock, xAI. Six providers, collectively, underpin a share of economic output that didn’t touch them two years ago.

The integration isn’t optional anymore. When a company replaces three junior analysts with a Claude pipeline, those analysts don’t sit in a break room waiting for the API to come back. They’re gone. The pipeline is the capacity. When the pipeline goes down, the capacity goes to zero. Not to “degraded,” not to “manual fallback.” Zero. The org doesn’t have the people to absorb the gap because the entire point was that it wouldn’t need them.

Most companies crossed the line from “uses AI” to “depends on AI” without noticing.

The fix isn’t regulation. Regulation is what got us here with the banks—it raised the compliance barrier, locked in the incumbents, and made the concentration permanent. The fix is competition. More providers, more open-source models good enough to run in production, and more companies that can switch when one provider goes down instead of going to zero.

But the market is moving in the other direction. OpenAI and Anthropic are building government partnerships, sitting in White House meetings, and shaping the safety frameworks that will determine who’s allowed to operate. The playbook is familiar: help write the rules, then benefit from the barriers those rules create. It’s Visa and Mastercard all over again—incumbents who love regulation because regulation is the moat.

Meta’s Llama is open-weight. DeepSeek proved you can build competitive models without a billion-dollar cluster. Mistral, Cohere, and dozens of smaller labs are shipping. The supply side of inference is more competitive than it looks from the headlines. But enterprise adoption is still concentrated in two or three providers because switching costs are real, and government-endorsed “safety” frameworks will raise them further.

Part of the problem is measurement. GDP is published quarterly by the Bureau of Economic Analysis, and the number is already three months stale by the time anyone reads it. The economic impact of a three-hour Claude outage on a Tuesday afternoon in March doesn’t show up in GDP. It shows up in missed sprint goals, delayed publications, stalled deal reviews, and customer service queues that backed up for an afternoon. Real cost, scattered across thousands of organizations, invisible to the instruments we use to measure output.

The providers themselves publish uptime metrics in real time. 90-day graphs, incident histories, resolution timestamps. They track their own reliability at a granularity the economic measurement system can’t match. The data exists. Nobody’s connecting it to the thing it affects.
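Connecting the two is mostly arithmetic. Here is a rough sketch of what that could look like, not a real model: the `Incident` record, the function names, and every input number (worker count, output per hour, share of work with no fallback) are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass
from typing import Iterable

# 90-day window, in minutes, matching the usual status-page graph.
WINDOW_MINUTES = 90 * 24 * 60


@dataclass
class Incident:
    provider: str       # hypothetical provider label
    minutes_down: int   # outage duration taken from a status page


def uptime_pct(incidents: Iterable[Incident],
               window_minutes: int = WINDOW_MINUTES) -> float:
    """Percentage of the window with no recorded outage."""
    down = sum(i.minutes_down for i in incidents)
    return 100.0 * (window_minutes - down) / window_minutes


def estimated_cost(incident: Incident,
                   dependent_workers: int,
                   output_per_worker_hour: float,
                   share_without_fallback: float) -> float:
    """Back-of-envelope lost output in dollars. Every input is an assumption."""
    hours = incident.minutes_down / 60
    return hours * dependent_workers * output_per_worker_hour * share_without_fallback


# Illustrative numbers only: a three-hour outage and a 36-minute one.
incidents = [Incident("example-provider", 180), Incident("example-provider", 36)]
uptime = uptime_pct(incidents)
cost = estimated_cost(incidents[0],
                      dependent_workers=10_000,
                      output_per_worker_hour=50.0,
                      share_without_fallback=0.5)
print(f"uptime {uptime:.2f}%, estimated cost ${cost:,.0f}")
# prints: uptime 99.83%, estimated cost $750,000
```

The uptime figure is the easy half, since the providers already publish it. The cost figure is only as good as the guesses fed into it, which is the measurement gap in miniature.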

At what point does an LLM provider’s outage constitute a systemic economic event rather than a product issue? When 10,000 companies depend on it? 100,000? When does the lost output from a four-hour outage exceed the GDP of a small country?

Nobody’s asking because the people in a position to ask are the same people benefiting from the concentration. The answer isn’t a new regulatory body. The answer is a market where no single provider’s outage takes the economy offline—where switching is cheap, alternatives are production-grade, and the default is redundancy, not dependence.
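In code, "redundancy as the default" can be as small as a loop over providers. This is a minimal sketch under stated assumptions: the providers are plain Python callables standing in for real clients, and `complete_with_fallback`, `primary_down`, and `local_open_weight` are names invented for illustration, not any vendor's API.

```python
from typing import Callable, List, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider errors out."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, answer) from the first that works."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins: a hosted provider that is down and a local open-weight model.
def primary_down(prompt: str) -> str:
    raise ConnectionError("503 from primary")


def local_open_weight(prompt: str) -> str:
    return f"[local] {prompt}"


used, answer = complete_with_fallback(
    "Summarize the Q3 deal memo",
    [("primary", primary_down), ("local", local_open_weight)],
)
print(used, answer)
# prints: local [local] Summarize the Q3 deal memo
```

The loop is the trivial part. It only helps if the second provider is good enough for production and the prompts, evals, and contracts already work against it, which is exactly where switching costs live.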

U.S. GDP Status tracks six LLM providers as economic components. 90-day uptime bars. Incident reports with estimated dollar impact. Modeled on the status pages every cloud provider already publishes, because that’s what these companies have become. The data is illustrative, not live. The format is performance art. The premise isn’t.

The last time the economy built dependencies this deep, this fast, on this few institutions, the response was to regulate the incumbents and make the concentration permanent. The better response is to make the concentration unnecessary. The status page shows how far we are from that.