Agent-era infrastructure

Add an AI assistant, ship an MCP server, call it done. Most companies are building at the wrong layer.

In 1960, shipping a truckload of medicine from Chicago to an interior city in Europe cost $2,400, about $25,000 today [1]. Half of that was spent covering ten miles on each end. Eight days to load. Eight days to unload. A dozen vendors touching every piece of cargo: truckers, railroads, port warehouses, steamship companies, customs, insurers, and freight forwarders. The distance wasn’t the expensive part. Every port, crane, warehouse, and customs form assumed a human had to handle every crate.

The shipping container didn’t fix any of those systems. It made them obsolete. Once the unit moving through the infrastructure changed—from individual cargo handled by longshoremen to sealed boxes handled by machines—every layer had to be rebuilt. Ports, cranes, trucks, railcars, insurance, customs, and labor contracts. The container was just a steel box. The rebuild took twenty years.

The assumption starting to break now is the same kind: the user is a person.

Every layer of the internet was built on it. Payments assume a legal entity. Discovery assumes someone is browsing. Identity assumes a government ID. Compute assumes someone renting capacity from a provider. These aren’t bugs. They’re architectural decisions that made sense when every session had a human at the keyboard.

The MCP ecosystem tells this story already: twenty thousand connectors in fourteen months. A third of the ecosystem is developer tools, databases, and search—developers wiring AI into what they already use. The layers agents need to operate on their own, finding services, proving identity, and paying for things, are either empty or weeks old.

That’s what an infrastructure transition looks like. Developers solve the developer problem first. The container was standardized before the cranes were rebuilt, before the ports were redesigned, and before the insurance contracts were rewritten. The protocol is the container. Everything underneath it is still the old port.

Where the agent runs

A research agent spins up, starts pulling data, and hits a wall at thirty seconds, a common timeout for serverless functions. The job doesn’t pause; it dies. No partial output, no explanation. The user tries again and it dies again.

Serverless was built for web requests: fast in, fast out. An agent that audits a codebase or monitors a data feed needs minutes, sometimes hours. It needs to maintain state across dozens of tool calls and resume if something interrupts it. The twenty thousand MCP servers in the ecosystem are lightweight connectors, the same pattern as Lambda. Modal and Fly.io are building for longer-running, stateful workloads—agent-native compute. The gap between those two is where the next infrastructure companies get built.
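To make "maintain state and resume" concrete, here is a minimal sketch. It assumes the agent's work can be split into named steps and that a local JSON file is an acceptable checkpoint store. The name `run_with_checkpoints` and the `(name, function)` step format are illustrative assumptions, not any platform's API.

```python
import json
from pathlib import Path


def run_with_checkpoints(steps, checkpoint_path):
    """Run (name, function) steps in order, saving progress after each one.

    Each step function receives the dict of earlier results and must return
    something JSON-serializable. If the process dies, calling this again with
    the same checkpoint file skips the steps that already finished.
    """
    path = Path(checkpoint_path)
    # Load prior progress if a checkpoint exists; otherwise start fresh.
    if path.exists():
        state = json.loads(path.read_text())
    else:
        state = {"done": [], "results": {}}

    for name, step in steps:
        if name in state["done"]:
            continue  # finished in an earlier run, so skip it
        state["results"][name] = step(state["results"])
        state["done"].append(name)
        # Persist after every step. A production version would write
        # atomically (temp file plus rename) to survive a crash mid-write.
        path.write_text(json.dumps(state))

    return state["results"]
```

A thirty-second function can't do this on its own, because nothing outlives the invocation. The checkpoint has to live somewhere that survives the timeout, which is the stateful part of "stateful workloads."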

How the agent talks to tools

MCP gave agents a standard protocol—one integration instead of a week of custom engineering per tool. Twenty thousand implementations in fourteen months suggests the protocol layer is converging fast.

But a protocol without the layers underneath it is a standard for connecting to tools you still find manually, authenticate with static keys, and pay for through human billing systems. MCP solved the integration problem. It didn’t solve the infrastructure problem.

How the agent finds things

Before containers, every shipment required a freight forwarder who knew which lines ran where, who had capacity, and what the rates were. That’s where agents are now.

The web has DNS and search engines. Agents have curated lists. Smithery, Glama, and a handful of registries index the ecosystem, but connecting an agent to a new tool still requires a developer who knows both systems exist. Somewhere on GitHub, someone built an MCP server that does exactly what your agent needs. Your agent will never find it. Neither will you, unless you already know it’s there.

There’s no lookup, no handshake, and no mechanism for an agent to discover capabilities it hasn’t been explicitly introduced to.

That’s the difference between a catalog and a market. A catalog requires someone to browse it. A market lets participants find each other. Every MCP deployment today is hand-assembled. A developer picks tools, writes config, and connects them. Scale is capped by developer hours, not by what’s available.
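The missing lookup can be sketched in a few lines. This is a toy in-memory index, not a description of how Smithery, Glama, or MCP work. The names `ToolListing` and `CapabilityIndex`, the capability tags, and the example endpoints are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolListing:
    """What a tool would publish about itself (hypothetical shape)."""
    name: str
    endpoint: str
    capabilities: frozenset


class CapabilityIndex:
    """In-memory index from capability tag to the tools that advertise it."""

    def __init__(self):
        self._by_capability = {}

    def publish(self, listing):
        # Register the listing under every capability it advertises.
        for capability in listing.capabilities:
            self._by_capability.setdefault(capability, []).append(listing)

    def find(self, *required):
        """Return listings that advertise every capability in `required`."""
        if not required:
            return []
        candidates = self._by_capability.get(required[0], [])
        return [t for t in candidates if set(required) <= t.capabilities]


index = CapabilityIndex()
index.publish(ToolListing("weather", "https://example.com/mcp/weather",
                          frozenset({"weather.forecast"})))
index.publish(ToolListing("freight", "https://example.com/mcp/freight",
                          frozenset({"freight.rates", "freight.tracking"})))
matches = index.find("freight.rates")
```

The data structure is the easy part. The hard parts are the ones this sketch skips: who runs the index, how listings are verified, and how an agent decides which match to trust.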

Whoever builds the discovery layer for agents builds the next great distribution platform.

Who the agent is

Every container carried a bill of lading: who shipped it, under what authority, with what insurance. The sealed box demanded a chain of custody. Agents don’t have one.

An agent books a flight on a corporate card. Nobody flags it. Nobody approves it. When finance asks who authorized the charge, the answer is the model, acting on behalf of a workflow triggered by a user who left the conversation three hours ago. That audit trail doesn’t exist.

The ecosystem isn’t built for this. More than half of MCP servers authenticate with static API keys: tokens that never expire, can’t be scoped, and sit in plain text. Anthropic’s early examples used them, developers followed, and nobody went back. Non-human identities already outnumber human ones 82 to 1 in enterprises.

Agents don’t need logins. They need delegation chains—records of which agent acted, on whose behalf, within what permissions, and at what time. It’s one of the most interesting unsolved problems in the stack.
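As a rough illustration of what a delegation chain record could contain, here is a sketch with one link per handoff. The field names, the `Delegation` class, and the `is_authorized` check are assumptions made for this example, not an existing standard. A real system would also sign each link cryptographically, which this sketch omits.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Delegation:
    """One link: `grantor` lets `agent` act within `scopes` until `expires_at`."""
    grantor: str
    agent: str
    scopes: frozenset
    expires_at: datetime


def is_authorized(chain, agent, scope, at):
    """Check that `chain` ends at `agent` and permits `scope` at time `at`."""
    if not chain or chain[-1].agent != agent:
        return False
    for prev, link in zip(chain, chain[1:]):
        # Each link must be granted by the agent named in the previous link.
        if link.grantor != prev.agent:
            return False
        # A link may narrow the permissions it received, never widen them.
        if not link.scopes <= prev.scopes:
            return False
    # Every link must cover the requested scope and still be unexpired.
    return all(scope in link.scopes and at < link.expires_at for link in chain)
```

With records like these, the finance question has an answer: walk the chain from the booking agent back to the person who granted the first link, and check that every step was in scope and unexpired when the charge happened.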

No audit trail, no enterprise deal.

How the agent pays

Containerization collapsed dozens of per-handoff charges into a single through-rate. Overnight, it became economical to ship goods that weren’t worth shipping before. Agent transactions have the opposite concern: the minimum charge is higher than the value of what’s moving.

An agent queries a weather API, checks a freight rate, and pulls a compliance record. Total cost: $0.003. Stripe’s minimum processing fee: $0.30. A hundred times the transaction.
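The arithmetic is worth writing out, using the two figures above. The helper name `fee_multiple` is made up for this example, and the second case rests on an assumption the figures don't state: that each of the three calls is billed as its own charge.

```python
from decimal import Decimal


def fee_multiple(value, fixed_fee, charges=1):
    """How many times larger the fixed fees are than the value being moved."""
    return fixed_fee * charges / value


total_value = Decimal("0.003")  # the three API calls combined
fixed_fee = Decimal("0.30")     # fixed fee per charge

# All three calls settled as a single charge.
one_charge = fee_multiple(total_value, fixed_fee)

# Assumption: each call is settled as its own charge, so the fee applies three times.
three_charges = fee_multiple(total_value, fixed_fee, charges=3)
```

Settled as one charge, the fee is 100 times the value moved. Settled per call, it is 300 times.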

Lightning Labs shipped an agent payment toolkit last month, framing it as infrastructure for a “machine-payable web.” Bitcoin’s Lightning Network handles sub-cent transactions natively, settles instantly, and doesn’t care whether the sender is a person or a script. Stripe and Coinbase are building their own agent payment layers. Two competing protocols, x402 and L402, are already making opposite bets on whether machine-to-machine payments need intermediaries at all.

Fifteen payment integrations in an ecosystem of twenty thousand. Plenty of open questions.

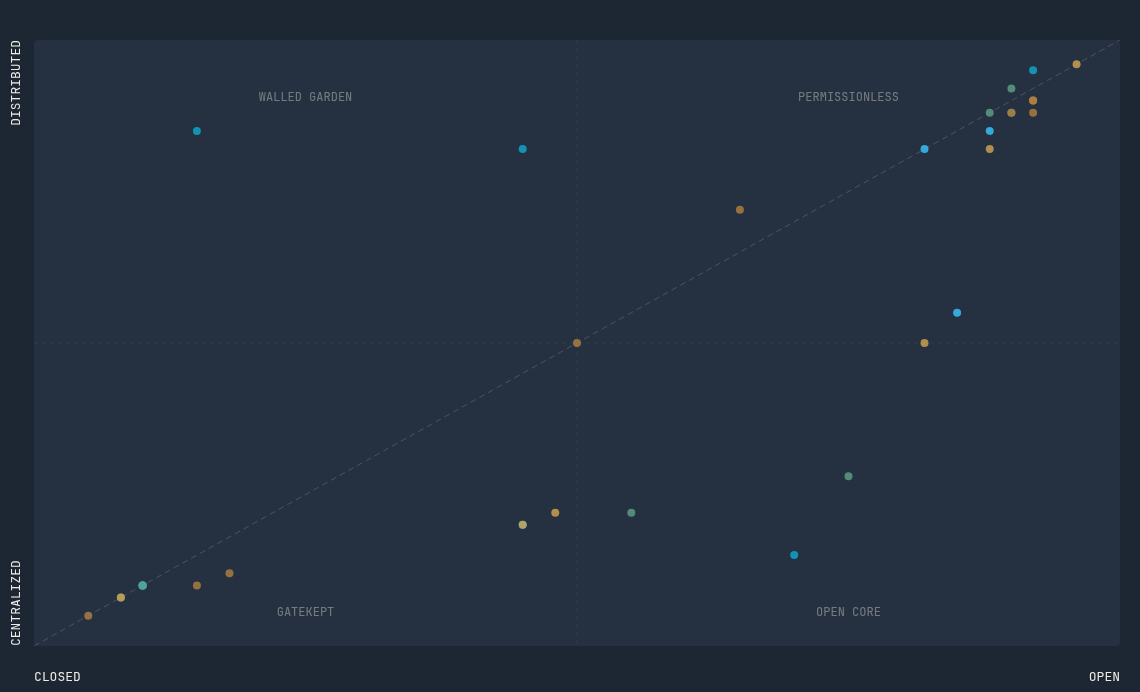

The container didn’t improve the ports. It changed what moved through them, and the ports had to be rebuilt from scratch. That rebuild is starting now. To get a sense of what it looks like, I scored a few dozen companies and protocols on how open and how distributed they are across all five layers. The result is an infrastructure map.

What jumped out: protocols are converging, but everything else is scattered. Compute is fragmenting across a dozen approaches. Payments is the most wide-open layer in the stack. Identity is bifurcated: enterprise SSO on one end, raw keypairs on the other, and almost nothing in between. Discovery has five competing models and no convergence at all.

Every one of those layers is an infrastructure company waiting to be built—for a user who never opens a browser.

This is the first piece in a series on agent-era infrastructure—the layers that have to be rebuilt when the user isn’t human:

Compute—agents need to run for hours. Serverless gives them thirty seconds.

Protocols—MCP solved integration. It didn’t solve infrastructure.

Discovery—whoever controls this layer controls distribution.

Identity—an agent acts, and nobody can say who authorized it.

Payments—$0.003 on $0.30 rails.

One layer at a time.

[1] Marc Levinson, The Box: How the Shipping Container Made the World Smaller and the World Economy Bigger (Princeton University Press, 2006).